This one is off-brand for me. No Python, no geochemistry, no machine learning. If you are here for those, next Tuesday we are back to our regularly scheduled programming.

Two recent events have had me thinking, and I wanted to write about them while the thinking was still fresh.

On March 22, an Air Canada Express CRJ900 landing at LaGuardia collided with an airport firefighting truck crossing the runway. Both pilots were killed. On April 15, the Canadian Forces Military Police announced charges against two Royal Canadian Navy members in connection with the January 2025 death of Petty Officer 2nd Class Gregory Applin, whose rigid-hull inflatable boat struck an unlit mooring buoy in Bedford Basin and capsized.

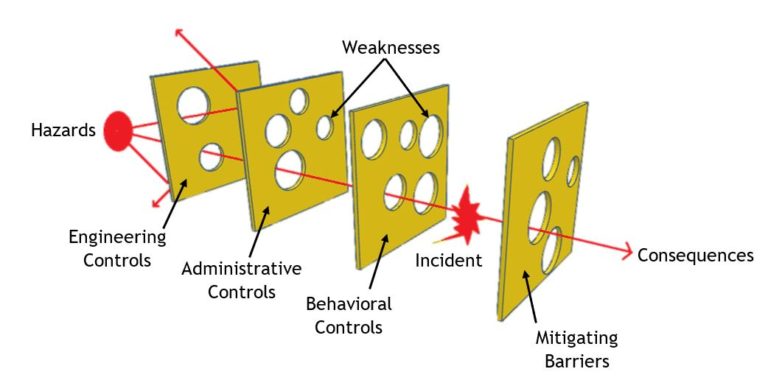

Both investigations are at an early stage or, in the Navy's case, now moving into the judicial process. I am not drawing conclusions about either event, and nothing in this post should be read that way. What I want to write about is something the RCN's own senior leadership said in their statement about the charges: that an incident like this almost never results from a single cause or single error, and is most likely the outcome of a combination of factors.

That framing is exactly right. And it runs in the opposite direction from how most of us, in most industries, talk about "human error."

Bridges don't fail from "concrete error"

When a bridge collapses, nobody writes "concrete error" in the report. Nobody blames the steel.

We investigate the load conditions, the design assumptions, the inspection schedule, the environmental exposure, the welds, the original material specifications, and the decisions made decades earlier about how much redundancy to build in. We treat concrete and steel as materials with known properties and known failure modes, and we ask whether the system around them accounted for those properties correctly.

But when something goes wrong involving a person, we reach for a different category of explanation. Human error. User error. Pilot error. Operator error. Crew error.

It is a strange double standard, and I think it is worth pushing back on.

Humans are a material in the system

This is the frame that keeps coming back to me from my coursework in human-computer interaction and systems design. If we take seriously the idea that a workflow, a ship, a cockpit, or an exploration program is a system, then the people inside that system are one of the materials the system is built from.

Human beings have known properties. We get tired. We get stressed. Our attention narrows under pressure. We fill in gaps with assumptions when information is missing. We defer to authority. We read ambiguous signals in whatever way is most consistent with what we expect to see. Our short-term memory is smaller than we think. Our situational awareness degrades in predictable ways when we are handling two positions instead of one.

None of this is a character flaw. It is a material property. And a well-designed system accounts for it, the same way a well-designed bridge accounts for the thermal expansion of steel.

If a system only works when every person in it performs perfectly, every time, under every condition, that is not a robust system. That is a system with a single point of failure, and the failure has been pre-assigned to whichever human happens to be standing there when it finally breaks.

A note from my own experience

I serve as Operations Officer at HMCS Cabot, and one of the things that job made very concrete for me is how much of safety comes down to design decisions made long before anyone gets on the water. Training standards. Checklist design. Communication protocols. Equipment layout. How information flows up and down a chain of command. Whether a junior sailor feels able to push back when something looks wrong.

None of those are about individual bravery or individual attentiveness on the day. They are about the system that individual is operating inside. When we get those right, people can do hard things safely. When we get them wrong, we are setting a trap and then acting surprised when someone steps in it.

The OpsO role taught me that "the crew was tired" or "the procedure was unclear" or "the equipment was out of spec" are not excuses. They are data. They are telling you the system has a property you need to design for.

What this means for exploration

Mineral exploration is not aviation, and a core shack is not the bridge of a frigate. As far as I am aware, nobody dies when a lithology gets miscoded. But the underlying logic transfers.

Field teams collect data under real conditions. They are often working long hours, in remote locations, in weather that makes fine motor tasks and careful observation harder. They may be junior, still learning the deposit type, still calibrating their eye. They may be under pressure to hit a meterage target. They may be using a logging template that was designed for a different project, or a tablet app that freezes when the battery gets cold.

When the data comes back messy, or inconsistent between loggers, or missing fields, the easy move is to write it off as sloppy work. That framing feels good because it locates the problem somewhere outside of us, in the people who "should have known better." But it does not fix anything. The next crew, or the same crew on the next project, will produce the same pattern of errors, because the system producing them has not changed.

The harder move, and the one that actually pays off, is to treat those errors as information about the workflow. Ask different questions:

- Is the logging template actually usable at 2am after a long shift, or does it assume ideal conditions?

- Are the category definitions unambiguous, or do two reasonable geologists interpret them differently?

- Is the QA feedback loop fast enough that a junior logger finds out about a mistake while it is still fresh, or weeks later when the context is gone?

- Is there a realistic way for a field tech to flag "I am not sure about this interval" without it feeling like an admission of failure?

- Are we training people to the standard the work actually demands, or to the standard it demanded ten years ago when the program was smaller?

- When a new hand joins the crew, do we pair them with someone experienced, or do we hand them a template and hope?

These are the kinds of questions that move problems from "that person messed up" to "our system has a property we need to design around."

Shirking responsibility feels good

I want to be honest about why "human error" is such a comfortable explanation. It is because it lets us stop thinking. Once the error is assigned to a specific person, the investigation has a place to end. The organization does not have to change. The procedures do not have to be rewritten. The budget does not have to be revisited.

That feels like resolution. It is not. It is deferral. The same failure mode is still sitting in the system, waiting for the next person to encounter it.

Treating people as a material with real properties is harder. It means admitting that the system you designed, or inherited, or tolerated, has a shape that predictably produces certain failures. It means taking responsibility for that shape. It means spending money and time on training, on better tools, on clearer procedures, on genuine oversight rather than the appearance of it.

But it is the only approach I have seen actually reduce the rate of recurring problems, in any organization I have worked in or studied.

A careful word about the two incidents

I want to say again, clearly, that I am not drawing conclusions about what happened at LaGuardia or in Bedford Basin. The NTSB and the Canadian military justice system will work through those questions, and the people involved deserve a fair process with the full facts.

What I am saying is that the instinct many of us feel when we hear about an accident, to locate the cause in a single person and stop there, is worth examining. The RCN's own leadership flagged it in their statement: these things almost never come from a single cause. If that is true at the sharpest end of operational risk, where people are highly trained and procedures are mature, it is certainly true in the kind of work most of us do.

If you lead a team, you are also a systems designer, whether you signed up for that or not. The workflows, tools, training, and culture you build are the materials your people operate inside. When something breaks, the first question worth asking is not who, but what about the system made that failure available.

Humans are a material in the system. Design accordingly.